How Does AI SolutionOps Work?

In our last post, we introduced the concept of AI SolutionOps and explained why we invented it. We drew a clear distinction between AI models and AI solutions (using a simile: AI models are like “engines” and solutions are like “cars”) and unpacked the major components of AI-powered solutions (data curation, model execution orchestration, workflow integration, etc.).

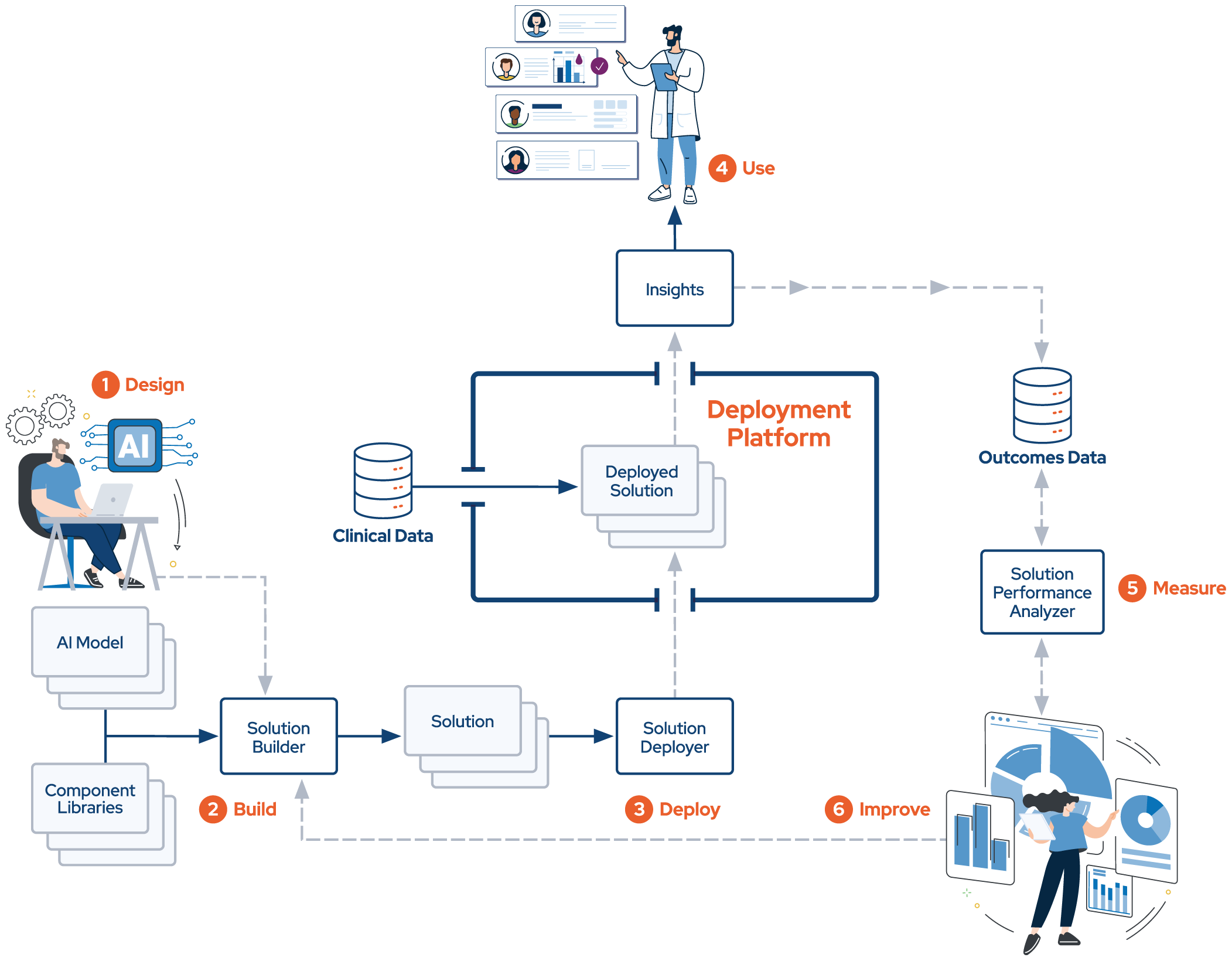

In this post, we’ll try to make AI SolutionOps more concrete by digging deeper into what it is and how it works. Let’s refer back to our trusty diagram to orient us:

From the diagram, you can see that the basics of AI SolutionOps are straightforward. The first part of the process follows an intuitive sequence of design, build, deploy, and use steps to get from a clean sheet of paper to a useful solution that delivers (one hopes) real-world value and impact. Why the “hope” caveat? Because we can’t know whether a solution is really delivering its intended impact unless we have a mechanism to measure and evaluate it. And if we find that we aren’t getting the intended impact, we need a way to remedy that. That is the job of the measure and improve steps.

Design

In the design phase, we envision what the solution will be at both a functional and a technical level. But a successful design depends on a clear objective: we need to know going in what the target is. Or, to put it another way, what problem are we solving? For example, we may want to build a solution that helps reduce the occurrence of strokes related to atrial fibrillation (AFib).

Once we know the target, we can think about the solution design. Following the old adage that it’s easier to get “from there to here” than “from here to there,” we prefer to start by envisioning how end users and stakeholders will interact with the solution and how it will fit into existing clinical workflows. This helps us decide how to surface the insights that drive action and decision-making. It also lets us work backwards to the data, technical components, and AI model(s) the solution requires. Together, these add up to what is, in effect, the solution’s “bill of materials” (its “BOM”).

Build

Once we have the BOM, we can build. AI SolutionOps provides component libraries that we can draw on to construct solutions without writing code: we snap the components together (like Lego blocks) and configure them as necessary. If the BOM calls for components that need to be built or modified, our platform engineering team fires up their dev tools and gets it done.

Cars without engines aren’t very useful, so a solution’s AI model (or models) is a core part of the BOM. From the standpoint of the build process, however, an AI model looks like any other component that we snap into the solution. We have optimized AI SolutionOps so that we can integrate virtually any kind of AI model and connect it to our data and workflow integration frameworks without writing code, which significantly streamlines solution development.
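The AI SolutionOps component libraries aren’t described in detail here. The following is therefore a minimal, hypothetical sketch of the general pattern the Build section describes, not the platform’s actual API.

In this pattern, every component (including the AI model) exposes the same interface, so a BOM is just an ordered list of configured components that get snapped together. The names `Component`, `DropMissing`, `ModelComponent`, `build_solution`, and `toy_afib_model` are all illustrative assumptions, and the model’s scoring rule is made up.

```python
from typing import Any, Callable, Dict, List, Optional

Record = Dict[str, Any]


class Component:
    """Hypothetical base class: every building block maps a record to a new record.

    `config` holds per-solution settings; the toy components below don't use it.
    """

    def __init__(self, name: str, config: Optional[Dict[str, Any]] = None) -> None:
        self.name = name
        self.config = config or {}

    def run(self, record: Record) -> Record:
        raise NotImplementedError


class DropMissing(Component):
    """Illustrative curation component: drops fields whose value is None."""

    def run(self, record: Record) -> Record:
        return {k: v for k, v in record.items() if v is not None}


class ModelComponent(Component):
    """Wraps any scoring function so a model snaps in like any other component."""

    def __init__(self, name: str, predict: Callable[[Record], float],
                 config: Optional[Dict[str, Any]] = None) -> None:
        super().__init__(name, config)
        self.predict = predict

    def run(self, record: Record) -> Record:
        # Add the model's output to the record under the component's name.
        return {**record, self.name: self.predict(record)}


def build_solution(bom: List[Component]) -> Callable[[Record], Record]:
    """Snap the BOM together: each component's output feeds the next one."""
    def solution(record: Record) -> Record:
        for component in bom:
            record = component.run(record)
        return record
    return solution


def toy_afib_model(record: Record) -> float:
    # Stand-in for a real model: a made-up score, for illustration only.
    return 0.9 if record.get("afib") else 0.1


solution = build_solution([DropMissing("curate"), ModelComponent("risk_score", toy_afib_model)])
print(solution({"patient_id": "p1", "afib": True, "notes": None}))
# {'patient_id': 'p1', 'afib': True, 'risk_score': 0.9}
```

Because the model is just another `Component`, swapping in a different model in this sketch means changing one entry in the BOM list rather than rewriting the pipeline.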

Although the diagram doesn’t call it out explicitly, every solution we develop with AI SolutionOps also undergoes rigorous testing (functional, user experience, performance, and more). AI SolutionOps provides tools and processes that make this testing faster and easier.

Deploy

After we’ve built and tested a solution, it’s ready for prime time: we can deploy it to providers so they can use it and get value from it. We engineered AI SolutionOps to simplify deployment into the highly heterogeneous technical environments of individual provider organizations. It includes flexible data and workflow integration frameworks that can “shape-shift” to the needs of each operating environment.

AI SolutionOps is not just tools and processes: it also includes a robust, scalable runtime deployment platform that:

- Maintains separate, secure production environments for each end customer

- Never commingles data between customers

- Is delivered in the cloud and scales elastically with demand

- Offers an advanced “zero trust” security and access control framework

- Holds a SOC 2 Type 2 attestation and exceeds typical healthcare provider security and data protection requirements

Use

Our designed, built, and deployed solution is now ready for production use. Operationally, most clinical AI solutions follow a fairly simple sequence:

- Gather the data the solution requires, both for model execution and solution context

- Curate the data

- Feed the data to one or more AI models

- Orchestrate execution of the model(s)

- Interpret the insights generated by the model(s) and make them understandable to humans

- Surface the insights into the workflow: right place, right time, right context, right stakeholder
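The six steps above can be sketched as a single function. This is a minimal, hypothetical illustration, not how AI SolutionOps implements them.

The step functions (`gather`, `curate`, `interpret`, `run_solution`) and the field names are assumptions. The "model" is a made-up scoring rule, and "surfacing" is reduced to returning a message addressed to a stakeholder rather than writing into a real EMR or worklist.

```python
from typing import Any, Callable, Dict, List

Record = Dict[str, Any]


def gather(sources: List[Record]) -> Record:
    # Step 1: merge fields from several upstream sources (later sources win on conflicts).
    merged: Record = {}
    for source in sources:
        merged.update(source)
    return merged


def curate(record: Record, required: List[str]) -> Record:
    # Step 2: keep only the fields the model needs, and refuse to score incomplete data.
    missing = [f for f in required if record.get(f) is None]
    if missing:
        raise ValueError(f"missing required fields: {missing}")
    return {f: record[f] for f in required}


def interpret(score: float, threshold: float) -> str:
    # Step 5: turn a raw score into a message a clinician can read.
    if score >= threshold:
        return f"Elevated risk (score {score:.2f}); consider follow-up."
    return f"No action suggested (score {score:.2f})."


def run_solution(sources: List[Record], required: List[str],
                 model: Callable[[Record], float], threshold: float,
                 stakeholder: str) -> Record:
    record = gather(sources)
    features = curate(record, required)
    score = model(features)  # Steps 3 and 4: feed the curated data to the model and run it
    message = interpret(score, threshold)
    # Step 6: route the insight, with patient context, to a specific stakeholder.
    return {"patient_id": record["patient_id"], "to": stakeholder, "message": message}


def toy_model(features: Record) -> float:
    # Made-up scoring rule standing in for a real clinical model.
    return 0.8 if features["afib_detected"] and features["age"] > 65 else 0.2


emr = {"patient_id": "p1", "age": 72}
ecg = {"afib_detected": True}
print(run_solution([emr, ecg], ["age", "afib_detected"], toy_model, 0.5, "cardiology"))
# {'patient_id': 'p1', 'to': 'cardiology', 'message': 'Elevated risk (score 0.80); consider follow-up.'}
```

Keeping the patient context (`patient_id`) out of the curated features while still attaching it to the surfaced message mirrors the distinction in the first step between data for model execution and data for solution context.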

AI SolutionOps includes all of these capabilities and more. It can integrate data from almost any upstream modality. It supports multimodal models and can execute models sequentially, in parallel, or conditionally, based on contingencies or defined business logic. And it integrates with virtually any kind of downstream application (EMRs, scheduling systems, departmental information systems, etc.) or, where needed, provides its own application framework.
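The three execution modes mentioned above (sequential, parallel, and contingent) can be illustrated with a small hypothetical sketch. It is not the platform’s orchestration engine, and the helper names and toy models are assumptions.

Parallel execution here uses Python’s standard `concurrent.futures.ThreadPoolExecutor`. That suits I/O-bound calls such as requests to remote model endpoints. CPU-bound models running in-process would typically need a process pool instead.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable, Dict, List

Record = Dict[str, Any]
Model = Callable[[Record], Record]


def run_sequential(models: List[Model], record: Record) -> Record:
    # Each model receives the previous model's output (e.g. detect, then stage).
    for model in models:
        record = model(record)
    return record


def run_parallel(scorers: Dict[str, Callable[[Record], Any]], record: Record) -> Record:
    # Independent models score the same input concurrently; results are keyed by name.
    with ThreadPoolExecutor() as pool:
        futures = {name: pool.submit(scorer, record) for name, scorer in scorers.items()}
        results = {name: future.result() for name, future in futures.items()}
    return {**record, **results}


def run_contingent(gate: Callable[[Record], bool], model: Model, record: Record) -> Record:
    # Business rule: only run the downstream model when the gate condition holds.
    return model(record) if gate(record) else record


def detect(r: Record) -> Record:
    # Toy "detector": flags AFib when an irregular rhythm was recorded.
    return {**r, "afib": r["irregular_rhythm"]}


def stage(r: Record) -> Record:
    # Toy "stager": assigns a made-up risk tier.
    return {**r, "tier": "high" if r["age"] > 65 else "low"}


patient = {"age": 70, "irregular_rhythm": True}
print(run_sequential([detect, stage], patient))
# {'age': 70, 'irregular_rhythm': True, 'afib': True, 'tier': 'high'}
print(run_parallel({"senior": lambda r: r["age"] >= 65,
                    "rhythm_flag": lambda r: r["irregular_rhythm"]}, patient))
# {'age': 70, 'irregular_rhythm': True, 'senior': True, 'rhythm_flag': True}
print(run_contingent(lambda r: r["afib"], stage, detect({"age": 70, "irregular_rhythm": False})))
# {'age': 70, 'irregular_rhythm': False, 'afib': False}
```

Because all three helpers take and return the same record shape, they can be nested. For example, a contingent step can sit inside a sequential chain.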

Measure and Improve

If you build it, will they come? Or, better said, if you deploy it, will they use it and get value out of it?

Given the hype around AI, and given how new clinical AI solutions are to healthcare, it’s vitally important that we measure and understand their real-world performance and impact, and that we share that information with sponsoring stakeholders. For AI-powered solutions to gain traction in healthcare, we need to show that they deliver meaningful clinical and financial ROI.

AI SolutionOps includes robust mechanisms for collecting downstream information from EMRs and other data modalities. This information helps us visualize and understand what happened to patients after their clinicians interacted with the insights a solution surfaced. For example, did a patient present in response to a scheduling event? Did they get an appropriate test or procedure? Was a diagnosis confirmed? What course of treatment was prescribed or ordered?

And if aggregated performance measurements reveal that a solution is not performing as expected (whether for an individual provider, location, geography, or some other grouping), AI SolutionOps provides the data and insights needed to make it better, for the engine(s) as well as the car.
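A simple version of the aggregation described above groups outcome events by provider or location and flags groups that fall below a target. The sketch below is a minimal, hypothetical illustration of that idea under stated assumptions.

It assumes each surfaced insight has already been reduced to a boolean `action_taken`, which in practice might mean "the patient got the recommended test" or a similar outcome drawn from the EMR. The metric, field names, target, and data are all made up and are not AI SolutionOps output.

```python
from collections import defaultdict
from typing import Any, Dict, List


def action_rates(events: List[Dict[str, Any]], level: str) -> Dict[str, float]:
    # Share of surfaced insights followed by the intended downstream action,
    # grouped by the chosen level ("provider", "location", ...).
    totals: Dict[str, int] = defaultdict(int)
    acted: Dict[str, int] = defaultdict(int)
    for event in events:
        key = event[level]
        totals[key] += 1
        if event["action_taken"]:
            acted[key] += 1
    return {key: acted[key] / totals[key] for key in totals}


def below_target(rates: Dict[str, float], target: float) -> List[str]:
    # Groups whose rate falls short of the target, sorted for stable output.
    return sorted(key for key, rate in rates.items() if rate < target)


# Toy outcome events (not real data): one row per surfaced insight.
events = [
    {"provider": "dr_a", "location": "north", "action_taken": True},
    {"provider": "dr_a", "location": "north", "action_taken": True},
    {"provider": "dr_b", "location": "north", "action_taken": False},
    {"provider": "dr_b", "location": "south", "action_taken": True},
]
print(below_target(action_rates(events, "provider"), 0.8))  # ['dr_b']
print(below_target(action_rates(events, "location"), 0.8))  # ['north']
```

The same events can flag different groups depending on the level of aggregation. In the toy data above, one provider’s missed follow-up is enough to pull the whole "north" location below target. This is one reason it helps to look at performance at several levels before deciding whether to fix the engine or the car.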

The Future of AI SolutionOps

To say we are excited about the potential of AI SolutionOps would be an understatement. Our mission to revolutionize care delivery through the practical application of clinical AI depends on it: we simply don’t believe we can fulfill that mission without it.

In upcoming posts, we’ll explore concrete examples of how we have used AI SolutionOps to build and deploy high-value, AI-powered solutions. We’ll also share some thoughts on where we think AI SolutionOps may be headed. Until then!